An ERP system is the single most important, and often most expensive, software application a business will use. Because of this, ERP systems encapsulate the most important business complexities and relationships.

They manage tightly connected data across inventory, procurement, orders, finance, warehousing, and more. For the people running these systems every day, that complexity becomes a constant operational burden: finding the right data, understanding how entities connect, and diagnosing issues when something looks wrong.

That work usually requires deep platform knowledge and a lot of manual investigation. It is one of the most consistent ERP pain points; there is no Datadog for ERP systems.

Enter Dossbot: AI that Solves Complex Business Problems

At DOSS, we knew that building an AI chatbot had become commoditized; what matters is the tools it has access to and the data it can use. So we built Dossbot not as a chatbot bolted onto the side of the product, but as an agentic layer integrated into the DOSS platform. The goal is simple: let users describe a problem in plain language, and have the system navigate the complexity on their behalf.

At DOSSCON1, we showed what this looks like in practice. We gave Dossbot a deliberately vague prompt: inspect a customer instance and find anything wrong with the SKU structure and its links to the BOM (Bill of Materials). No table names, column hints, or definition of “wrong.”

Dossbot explored the schema, identified relevant tables, traced SKU-BOM relationships, and surfaced circular BOM references, a failure mode that would normally take a human analyst hours to isolate.
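To make the failure mode concrete: a circular BOM reference is a cycle in the graph of "parent SKU contains component SKU" links. The sketch below is illustrative only and is not how Dossbot itself performs the check; the `BomLink` shape, the `findBomCycle` helper, and the SKU names are all hypothetical. It uses a plain depth-first search to return one cycle if any exists.

```typescript
// Hypothetical shape: one row per "parent SKU contains component SKU" link.
interface BomLink {
  parentSku: string;
  componentSku: string;
}

// Returns one cycle as a list of SKUs (first === last), or null if the BOM is acyclic.
function findBomCycle(links: BomLink[]): string[] | null {
  const children = new Map<string, string[]>();
  for (const { parentSku, componentSku } of links) {
    const list = children.get(parentSku) ?? [];
    list.push(componentSku);
    children.set(parentSku, list);
  }

  const done = new Set<string>(); // fully explored and known to be cycle-free
  const path: string[] = []; // SKUs on the current depth-first path
  const onPath = new Set<string>();

  function visit(sku: string): string[] | null {
    if (onPath.has(sku)) {
      // We came back to a SKU that is still on the path: that is the cycle.
      return [...path.slice(path.indexOf(sku)), sku];
    }
    if (done.has(sku)) return null;
    path.push(sku);
    onPath.add(sku);
    for (const child of children.get(sku) ?? []) {
      const cycle = visit(child);
      if (cycle) return cycle;
    }
    path.pop();
    onPath.delete(sku);
    done.add(sku);
    return null;
  }

  for (const sku of children.keys()) {
    const cycle = visit(sku);
    if (cycle) return cycle;
  }
  return null;
}

const exampleCycle = findBomCycle([
  { parentSku: "BIKE", componentSku: "WHEEL" },
  { parentSku: "WHEEL", componentSku: "HUB" },
  { parentSku: "HUB", componentSku: "BIKE" }, // points back at the top-level SKU
]);
console.log(exampleCycle);
```

The hard part in practice is not the search itself but knowing which tables and columns encode these links, which is the traversal work the agent took on in the demo.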

That demo captured the core shift: users no longer need to know where to look or what query to write. They describe the problem; the agent does the traversal.

The path to that outcome started well before writing agent code. It started with platform architecture.

The Platform Did Most of the Hard Work

When we started building Dossbot, the key question was not “How do we connect an LLM to our data?”

It was: “How much of this problem has our architecture already solved?”

The answer: most of it.

DOSS is built on a unified, schema-driven architecture. Entities, relationships, and operations are defined through a single GraphQL type system. Those definitions flow through the stack via codegen, from database schema to backend API to frontend contracts. It is not a set of disconnected layers stitched together over time. It is one connected type system.

On top of that, DOSS has an RBAC model enforced at the GraphQL API layer and at the database layer. Every query and mutation passes through authentication and authorization checks, including:

- organization-level isolation

- table-level access control

- row-level security

- field-level visibility

- operation-level permissions

All data access runs through this single API surface.

That meant building Dossbot was primarily about orchestration and UX, not rebuilding data access, permissions, or business logic. The abstractions we needed for agents could plug directly into existing GraphQL and codegen infrastructure.

The thesis behind Dossbot is straightforward: a unified, schema-driven, permission-aware platform architecture makes AI agent integration a natural extension rather than a bolt-on. The engineering choices that make this work, from durable execution to tool abstraction to real-time events, are what turn that foundation into a production-grade and secure agent.

The Agent Loop

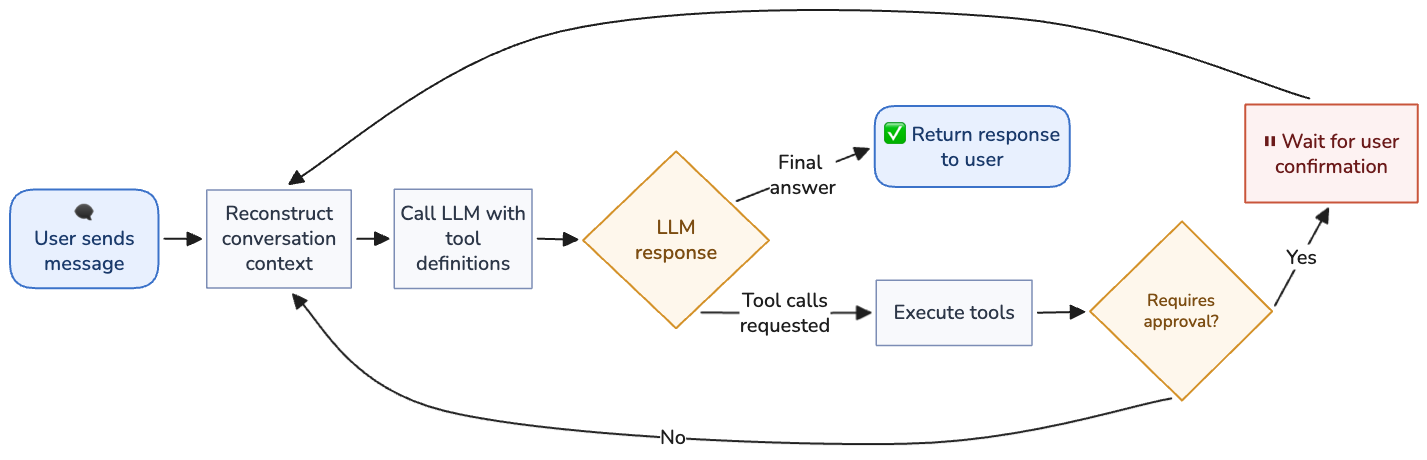

At the heart of Dossbot is the agent loop: an explicit, controlled cycle where each iteration of LLM generation and tool execution is fully observable and interruptible.

Each iteration follows the same pattern:

- Reconstruct conversation context from the database

- Call the LLM with available tools

- Persist the result

- Execute requested tools

- Decide whether to continue or stop

If the model requests tools, results are appended and the loop continues. If it returns a final answer, the run exits. A configurable max-step limit prevents runaway behavior.
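The five steps and the stop conditions can be sketched as a small loop. This is a simplified illustration under stated assumptions, not the production code: the `ModelReply`, `HistoryEntry`, and `LoopDeps` shapes are hypothetical, the model is a scripted stub, and everything is synchronous for brevity where the real system runs each step as a durable activity.

```typescript
// Hypothetical types: the real message and tool shapes are not given in the article.
type ToolCall = { tool: string; input: unknown };
type ModelReply =
  | { kind: "final"; text: string }
  | { kind: "tools"; calls: ToolCall[] };
type HistoryEntry =
  | { role: "user"; text: string }
  | { role: "assistant"; reply: ModelReply }
  | { role: "tool"; tool: string; result: unknown };

interface LoopDeps {
  loadHistory(): HistoryEntry[]; // 1. reconstruct context from storage
  callModel(history: HistoryEntry[]): ModelReply; // 2. call the LLM with tools
  persist(entry: HistoryEntry): void; // 3. persist each result
  runTool(call: ToolCall): unknown; // 4. execute a requested tool
}

type LoopOutcome =
  | { status: "completed"; answer: string; steps: number }
  | { status: "max_steps_reached"; steps: number };

function runAgentLoop(deps: LoopDeps, maxSteps: number): LoopOutcome {
  for (let step = 1; step <= maxSteps; step++) {
    const history = deps.loadHistory();
    const reply = deps.callModel(history);
    deps.persist({ role: "assistant", reply });

    // 5. Decide whether to continue or stop.
    if (reply.kind === "final") {
      return { status: "completed", answer: reply.text, steps: step };
    }
    for (const call of reply.calls) {
      deps.persist({ role: "tool", tool: call.tool, result: deps.runTool(call) });
    }
  }
  // The max-step limit is what prevents runaway behavior.
  return { status: "max_steps_reached", steps: maxSteps };
}

// Usage with an in-memory store and a scripted stand-in for the model.
const store: HistoryEntry[] = [{ role: "user", text: "How many open orders?" }];
const outcome = runAgentLoop(
  {
    loadHistory: () => [...store],
    callModel: (history) =>
      history.some((entry) => entry.role === "tool")
        ? { kind: "final", text: "There are 3 open orders." }
        : { kind: "tools", calls: [{ tool: "queryRows", input: { table: "orders" } }] },
    persist: (entry) => {
      store.push(entry);
    },
    runTool: () => ({ rowCount: 3 }),
  },
  8,
);
console.log(outcome);
```

Because context is reloaded from storage at the top of every iteration, the loop holds no state of its own between steps, which is what makes pausing and resuming straightforward.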

This explicit loop design gives us safety, observability, and interruptibility. The loop can be paused while waiting for user approval on a tool call, or canceled entirely at any point. Each step executes as a durable activity with retry and persistence guarantees. And because every iteration is discrete and logged, we get a complete record of the agent's reasoning chain: not just the final answer, but every tool it invoked, every query it ran, and every intermediate conclusion it reached.

Tools as Abilities

Tools are how Dossbot acts on the platform. Instead of generating text only, the model can request concrete operations: inspect schema, query records, create data, update structures.

Each tool is defined declaratively with:

- a name

- a usage description

- input/output schemas

Tool definitions contain no business execution logic. They are contracts. The model produces schema-conforming JSON; execution happens separately through durable activities with retry/persistence semantics.

That separation keeps tools composable and auditable. Adding a tool is predictable: define the schema, implement the executor, register it. Building a tool doesn’t require deep knowledge of Dossbot internals, which lowers the barrier to entry for any engineer or agent to contribute.
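A minimal sketch of that split between contract and execution is shown here. The `ToolDefinition`, `ToolRegistry`, and `countRows` names are hypothetical, and the schema is a dependency-free stand-in rather than the schema library used in the real stack.

```typescript
// Hypothetical, dependency-free stand-in for an input/output schema.
type FieldType = "string" | "number" | "boolean";
type Schema = Record<string, { type: FieldType; description: string }>;

// The contract the model sees: a name, usage guidance, and input/output schemas.
interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: Schema;
  outputSchema: Schema;
}

// Execution lives elsewhere; in the article it runs as a durable activity.
type ToolExecutor = (input: Record<string, unknown>) => Record<string, unknown>;

class ToolRegistry {
  private readonly tools = new Map<string, { definition: ToolDefinition; execute: ToolExecutor }>();

  register(definition: ToolDefinition, execute: ToolExecutor): void {
    if (this.tools.has(definition.name)) {
      throw new Error(`Tool already registered: ${definition.name}`);
    }
    this.tools.set(definition.name, { definition, execute });
  }

  // Only definitions are exposed to the model, never executors.
  definitions(): ToolDefinition[] {
    return Array.from(this.tools.values(), (tool) => tool.definition);
  }

  run(name: string, input: Record<string, unknown>): Record<string, unknown> {
    const tool = this.tools.get(name);
    if (!tool) throw new Error(`Unknown tool: ${name}`);
    return tool.execute(input);
  }
}

// Define schema, implement executor, register it.
const registry = new ToolRegistry();
registry.register(
  {
    name: "countRows",
    description: "Count the rows in a table.",
    inputSchema: { table: { type: "string", description: "Table name" } },
    outputSchema: { count: { type: "number", description: "Row count" } },
  },
  (input) => ({ count: input["table"] === "skus" ? 42 : 0 }), // stubbed executor
);
console.log(registry.definitions().map((d) => d.name), registry.run("countRows", { table: "skus" }));
```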

Query and Mutation Tools

Dossbot’s tools are split into two categories:

- Query tools: read operations (schemas, rows, workflows). Run immediately.

- Mutation tools: write operations (create/update records, schema changes). Require explicit user approval.

For mutations, Dossbot generates a confirmation payload explaining exactly what it plans to change, where, and with what values. The user can approve or reject. Rejection feedback is sent back into the loop so the model can revise.

That human-in-the-loop boundary is non-negotiable in a business-critical system: the basic pattern is free exploration, explicit consent for writes.
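The approval boundary can be expressed as a small decision function. This is a sketch under assumptions: the `RequestedCall`, `ConfirmationPayload`, and `UserDecision` shapes are hypothetical, and the `table` input field is invented for the example.

```typescript
interface RequestedCall {
  tool: string;
  kind: "query" | "mutation";
  input: Record<string, unknown>;
}

// What the user is shown: what will change, where, and with what values.
interface ConfirmationPayload {
  operation: string;
  target: string;
  values: Record<string, unknown>;
}

type UserDecision = { approved: true } | { approved: false; feedback: string };

type NextStep =
  | { action: "execute" }
  | { action: "await_confirmation"; payload: ConfirmationPayload }
  | { action: "return_to_model"; toolResult: string };

function nextStep(call: RequestedCall, decision?: UserDecision): NextStep {
  // Reads run immediately.
  if (call.kind === "query") return { action: "execute" };

  // Writes wait until the user has decided.
  if (decision === undefined) {
    return {
      action: "await_confirmation",
      payload: {
        operation: call.tool,
        target: String(call.input["table"] ?? "unknown"),
        values: call.input,
      },
    };
  }
  if (decision.approved === true) return { action: "execute" };

  // Rejection feedback goes back into the loop so the model can revise.
  return {
    action: "return_to_model",
    toolResult: `User rejected ${call.tool}: ${decision.feedback}`,
  };
}

const update: RequestedCall = {
  tool: "updateRecord",
  kind: "mutation",
  input: { table: "skus", id: "sku_1", unitCost: 12.5 },
};
console.log(nextStep(update));
console.log(nextStep(update, { approved: false, feedback: "Wrong SKU, use sku_2" }));
```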

Prompt Engineering in Tool Descriptions

A lot of reliability comes from tool descriptions. The model needs more than “what this tool does.” It needs execution heuristics and prerequisites, like fetching table schema before querying rows to infer type structure correctly.

Better tool descriptions reduce loop count and improve first-pass correctness.
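As an illustration of what that guidance might look like, here is a hypothetical description for a row-query tool. The tool names `queryRows` and `getTableSchema` and the exact wording are assumptions, not the descriptions used in production.

```typescript
// Hypothetical tool names; the point is the guidance embedded in the description.
const queryRowsDescription = [
  "Fetch rows from a single table.",
  "Prerequisite: call getTableSchema for the same table first, and use the",
  "returned column names and types when building filters.",
  "Request only the columns you need and keep the page size small.",
].join(" ");

const queryRowsTool = {
  name: "queryRows",
  description: queryRowsDescription,
};
console.log(queryRowsTool.description);
```

The description states the prerequisite and the heuristics directly, so the model does not have to discover them through failed calls.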

Security by Default

Every Dossbot tool calls our existing GraphQL API, authenticated with the user's own JWT token. No tool bypasses this layer. This is a strict, non-negotiable architectural requirement.

The alternative, giving the agent direct database access, would be faster to implement but fundamentally insecure. DOSS has a sophisticated RBAC model enforcing organization-level isolation, table-level access controls, row-level security, field-level visibility, and operation-level permissions. Bypassing the API layer would mean bypassing all of these protections, and the agent could see data the user has no permission to access.

By routing every tool call through the GraphQL API with the user's token, the agent inherits the full permission model for free. The principle is simple: the agent operates with exactly the same permissions as the user. That rules out privilege escalation through AI, cross-tenant data leakage, and bypassing of business rules enforced at the API layer.

The user's JWT token flows through the entire execution chain, from the frontend, through the API, into the durable workflow, through each tool activity, and into the GraphQL client that executes the tool. At every step, the same authentication and authorization checks run as if the user were making the request directly.
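At the end of that chain, a tool executor only ever builds requests with the token it was handed. The sketch below assumes the token travels as a standard `Authorization: Bearer` header on a JSON POST; the endpoint URL, the `ToolExecutionContext` shape, and the example query are placeholders, not real DOSS details.

```typescript
// Hypothetical context object handed to every tool execution.
interface ToolExecutionContext {
  userJwt: string; // the end user's own token, never a service credential
}

interface GraphQLHttpRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Placeholder endpoint, not a real DOSS URL.
const GRAPHQL_ENDPOINT = "https://api.example.com/graphql";

function buildToolRequest(
  ctx: ToolExecutionContext,
  query: string,
  variables: Record<string, unknown>,
): GraphQLHttpRequest {
  // There is deliberately no fallback credential: without a user token, no call is made.
  if (ctx.userJwt.length === 0) {
    throw new Error("Refusing to call the API without a user token");
  }
  return {
    url: GRAPHQL_ENDPOINT,
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${ctx.userJwt}`,
    },
    body: JSON.stringify({ query, variables }),
  };
}

// Illustrative query against an invented schema.
const toolRequest = buildToolRequest(
  { userJwt: "user-token-123" },
  "query Rows($table: String!) { rows(table: $table) { id } }",
  { table: "skus" },
);
console.log(toolRequest.headers);
```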

Beyond RBAC, all workflow data is encrypted with AES-256 before being persisted to the durable execution engine. Dossbot conversations may contain sensitive business data, and the encryption ensures that only DOSS infrastructure can decrypt and read the actual content.

The Data Model

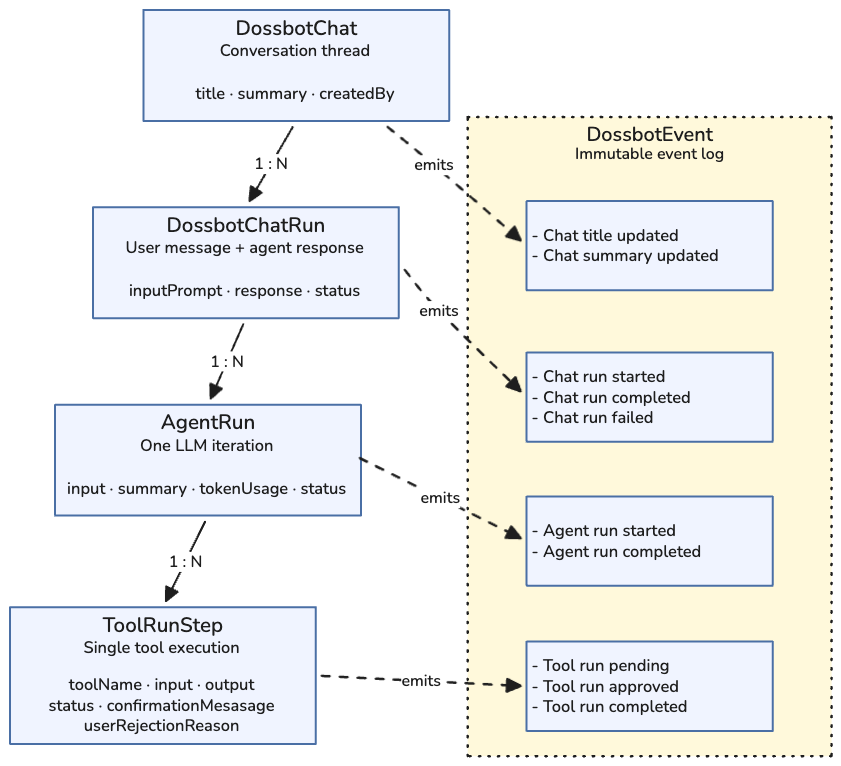

Dossbot's data model is designed around four levels of granularity, each with its own lifecycle and status tracking:

- DossbotChat represents a full conversation thread. It persists indefinitely and can be resumed at any time. Each chat has an auto-generated title and summary that update as the conversation progresses.

- DossbotChatRun maps one-to-one with a user message. When the user types a prompt and sends it, a new ChatRun is created. It tracks the input prompt, the agent's final response, and the run's status.

- AgentRun represents a single iteration of the agent loop, corresponding to one LLM API call. A ChatRun may have multiple AgentRuns as the agent loops through tool calls. Each AgentRun records the full message array sent to the LLM, the response, and token usage.

- ToolRunStep represents a single tool execution within an agent run. It records which tool was called, the parameters the LLM generated, the tool's result, and the execution status, including whether the user approved or rejected a mutation and their reasoning if they rejected.
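The four levels nest naturally as types. The entity names below come from the list above, but every field name and status value is an assumption made for illustration.

```typescript
// Status values are assumed; the article only says each level tracks its own status.
type RunStatus = "pending" | "running" | "completed" | "failed" | "cancelled";
type ToolStepStatus = "pending" | "awaiting_approval" | "rejected" | "succeeded" | "failed";

interface DossbotChat {
  id: string;
  title: string; // auto-generated, updated as the conversation progresses
  summary: string;
}

interface DossbotChatRun {
  id: string;
  chatId: string; // one ChatRun per user message
  prompt: string;
  finalResponse: string | null;
  status: RunStatus;
}

interface AgentRun {
  id: string;
  chatRunId: string; // one AgentRun per LLM call
  messages: unknown[]; // full message array sent to the LLM
  response: unknown;
  tokenUsage: { input: number; output: number };
}

interface ToolRunStep {
  id: string;
  agentRunId: string;
  toolName: string;
  params: unknown; // parameters the LLM generated
  result: unknown;
  status: ToolStepStatus;
  rejectionReason?: string; // set when the user rejects a mutation
}

// Example roll-up: total tokens spent answering one user message.
function totalTokens(chatRunId: string, agentRuns: AgentRun[]): number {
  return agentRuns
    .filter((run) => run.chatRunId === chatRunId)
    .reduce((sum, run) => sum + run.tokenUsage.input + run.tokenUsage.output, 0);
}

const sampleRuns: AgentRun[] = [
  { id: "ar_1", chatRunId: "cr_1", messages: [], response: null, tokenUsage: { input: 900, output: 100 } },
  { id: "ar_2", chatRunId: "cr_1", messages: [], response: null, tokenUsage: { input: 1200, output: 300 } },
  { id: "ar_3", chatRunId: "cr_2", messages: [], response: null, tokenUsage: { input: 50, output: 5 } },
];
console.log(totalTokens("cr_1", sampleRuns));
```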

Tying all four levels together is the immutable event log. Every state transition across every level is recorded as a DossbotEvent. There are dozens of event types covering chat run lifecycle, agent run lifecycle, tool run lifecycle, tool confirmations, and post-processing events like title and summary updates. Each event carries a type-specific payload with the relevant details.

This event log serves multiple purposes. It drives the real-time UI (described below), it provides a complete audit trail of every action the agent took, and it forms the foundation for offline evaluation, since every conversation is fully recorded and replayable.
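Because the log is append-only, the current state of a run can be derived by folding over its events. The sketch below shows that idea with a handful of invented event types; the real set has dozens, and the `seq` ordering field is an assumption.

```typescript
// Hypothetical subset of event types, each with a type-specific payload.
type DossbotEvent =
  | { seq: number; type: "CHAT_RUN_STARTED"; chatRunId: string }
  | { seq: number; type: "TOOL_RUN_STARTED"; chatRunId: string; toolName: string }
  | { seq: number; type: "TOOL_CONFIRMATION_REQUESTED"; chatRunId: string; toolName: string }
  | { seq: number; type: "TOOL_RUN_COMPLETED"; chatRunId: string; toolName: string }
  | { seq: number; type: "CHAT_RUN_COMPLETED"; chatRunId: string };

interface RunView {
  status: "idle" | "running" | "awaiting_confirmation" | "completed";
  toolsUsed: string[];
}

// Rebuilds the view of one chat run purely from its recorded events.
function replay(events: readonly DossbotEvent[], chatRunId: string): RunView {
  const view: RunView = { status: "idle", toolsUsed: [] };
  const ordered = events
    .filter((event) => event.chatRunId === chatRunId)
    .sort((a, b) => a.seq - b.seq);

  for (const event of ordered) {
    switch (event.type) {
      case "CHAT_RUN_STARTED":
      case "TOOL_RUN_STARTED":
        view.status = "running";
        break;
      case "TOOL_CONFIRMATION_REQUESTED":
        view.status = "awaiting_confirmation";
        break;
      case "TOOL_RUN_COMPLETED":
        view.status = "running";
        view.toolsUsed.push(event.toolName);
        break;
      case "CHAT_RUN_COMPLETED":
        view.status = "completed";
        break;
    }
  }
  return view;
}

const eventLog: DossbotEvent[] = [
  { seq: 1, type: "CHAT_RUN_STARTED", chatRunId: "cr_1" },
  { seq: 2, type: "TOOL_RUN_STARTED", chatRunId: "cr_1", toolName: "getTableSchema" },
  { seq: 3, type: "TOOL_RUN_COMPLETED", chatRunId: "cr_1", toolName: "getTableSchema" },
  { seq: 4, type: "TOOL_CONFIRMATION_REQUESTED", chatRunId: "cr_1", toolName: "updateRecord" },
];
console.log(replay(eventLog, "cr_1"));
```

The same fold serves the UI, the audit trail, and offline evaluation, since all three are just different readers of one log.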

Building LLM Context per Iteration

Every iteration reconstructs full context from persisted history: user messages, model outputs, tool calls, tool results, and system prompt state. The system prompt is augmented with the current date and time in the user's timezone so that relative time queries (“this month,” “last quarter,” etc.) are interpreted correctly.

We also include page-level breadcrumb context from the DOSS UI (object type + object ID). That allows prompts like “explain what I’m looking at” without clarifying round-trips.
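A minimal sketch of that augmentation follows. The `buildSystemPrompt` function, the `Breadcrumb` shape, and the prompt wording are hypothetical; only the two ingredients (localized current time and object type plus object ID) come from the description above.

```typescript
// Hypothetical breadcrumb shape: which object the user has open in the UI.
interface Breadcrumb {
  objectType: string;
  objectId: string;
}

function buildSystemPrompt(
  basePrompt: string,
  now: Date,
  timeZone: string, // IANA name, e.g. "America/New_York"
  breadcrumb?: Breadcrumb,
): string {
  // Render "now" in the user's timezone so "this month" resolves the way they expect.
  const localNow = now.toLocaleString("en-US", {
    timeZone,
    weekday: "long",
    year: "numeric",
    month: "long",
    day: "numeric",
    hour: "2-digit",
    minute: "2-digit",
  });

  const lines = [
    basePrompt,
    `Current date and time for the user: ${localNow} (${timeZone}).`,
  ];
  if (breadcrumb) {
    lines.push(`The user is currently viewing ${breadcrumb.objectType} ${breadcrumb.objectId}.`);
  }
  return lines.join("\n");
}

const systemPrompt = buildSystemPrompt(
  "You are an assistant operating inside an ERP platform.",
  new Date("2024-03-15T12:00:00Z"),
  "America/New_York",
  { objectType: "PurchaseOrder", objectId: "po_1042" },
);
console.log(systemPrompt);
```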

Schemas as Contracts

Schemas are the contract between model output and runtime execution.

When Dossbot requests a tool, the payload must validate against the tool’s input schema. This removes fragile string parsing and gives predictable structured execution.

We use Zod-based schemas for:

- compile-time typing

- runtime validation

- tight integration with our AI SDK stack

Tool schemas also encode operational constraints (for example, bounded row pagination to control context usage).

When the LLM generates JSON that doesn't match the expected schema, the system self-corrects. The tool invocation fails, the durable execution layer retries the activity, and if it continues to fail, the error message is sent back to the LLM as a tool result. The agent sees the error and generates a corrected tool call in the next iteration. This self-correcting loop is critical for reliability. LLMs are non-deterministic and occasionally produce malformed inputs, but the architecture handles this gracefully without requiring user intervention.
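The validate-then-report pattern can be sketched without any library. This example hand-rolls the check so it stays dependency-free; it stands in for the schema validation described above rather than reproducing it. The `QueryRowsInput` shape, the `MAX_PAGE_SIZE` bound, and the error wording are assumptions.

```typescript
// Hypothetical input for a row-query tool. MAX_PAGE_SIZE is an assumed bound.
interface QueryRowsInput {
  table: string;
  limit: number;
}
const MAX_PAGE_SIZE = 100;

type Validation<T> = { ok: true; value: T } | { ok: false; error: string };

function validateQueryRowsInput(raw: unknown): Validation<QueryRowsInput> {
  if (typeof raw !== "object" || raw === null) {
    return { ok: false, error: "input must be a JSON object" };
  }
  const candidate = raw as Record<string, unknown>;
  const table = candidate["table"];
  const limit = candidate["limit"];
  if (typeof table !== "string" || table.length === 0) {
    return { ok: false, error: "table must be a non-empty string" };
  }
  // Bounded pagination keeps a single tool result from flooding the context window.
  if (typeof limit !== "number" || !Number.isInteger(limit) || limit < 1 || limit > MAX_PAGE_SIZE) {
    return { ok: false, error: `limit must be an integer between 1 and ${MAX_PAGE_SIZE}` };
  }
  return { ok: true, value: { table, limit } };
}

// Produces the string appended to the conversation as the tool result.
function toToolResult(raw: unknown, execute: (input: QueryRowsInput) => unknown): string {
  const checked = validateQueryRowsInput(raw);
  if (checked.ok === false) {
    // The model reads this on the next iteration and can correct its call.
    return JSON.stringify({ error: `Invalid input for queryRows: ${checked.error}` });
  }
  return JSON.stringify({ data: execute(checked.value) });
}

const stubExecute = (input: QueryRowsInput) => ({ table: input.table, rows: [] as unknown[] });
console.log(toToolResult({ table: "skus", limit: "50" }, stubExecute)); // limit is a string: rejected
console.log(toToolResult({ table: "skus", limit: 50 }, stubExecute));
```

Returning the validation message as an ordinary tool result is what lets the correction happen inside the loop instead of surfacing as a user-facing failure.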

Some tools also include an input preprocessing step that catches and fixes known patterns of LLM input deviations before execution, preventing unnecessary retries for common mistakes.

Each of these layers (schema validation, error feedback, input preprocessing) pushes the system toward a deterministic execution flow, while keeping the model's reasoning non-deterministic.

Durable Execution

LLM workflows fail differently than traditional API flows. They have more variable latency/error behavior, and often span many dependent calls. A single user request can involve schema inspection, data fetches, analysis, and write operations across services. Any step can fail.

Durable execution handles this by persisting workflow state at each step and resuming from the last durable boundary instead of restarting from scratch.

One Workflow Per Conversation

Each Dossbot conversation gets its own long-lived durable workflow instance. The workflow persists for the entire lifetime of the conversation, but a workflow that is simply waiting for the user's next message doesn't consume any compute resources. This means users can return to any past conversation and pick up exactly where they left off.

The workflow is signal-driven. Signals notify the workflow of new user messages, tool confirmations or rejections, cancellation requests, and post-processing triggers such as title and summary generation. The system reacts in real time without actively polling or consuming resources when nothing is happening.
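One way to picture a signal-driven workflow is as a state machine that only moves when a signal arrives. The sketch below is engine-agnostic and does not use any real durable execution API; the `Signal` and `WorkflowState` shapes are assumptions based on the signal kinds listed above.

```typescript
// Hypothetical signal shapes for the four kinds of signal described above.
type Signal =
  | { type: "user_message"; text: string }
  | { type: "tool_confirmation"; toolRunId: string; approved: boolean; reason?: string }
  | { type: "cancel" }
  | { type: "post_process"; task: "title" | "summary" };

type WorkflowState =
  | { phase: "idle" } // waiting for the next user message, no work in progress
  | { phase: "running" }
  | { phase: "awaiting_confirmation"; toolRunId: string };

function onSignal(state: WorkflowState, signal: Signal): WorkflowState {
  switch (signal.type) {
    case "user_message":
      // A new message starts a run only when nothing else is in progress.
      return state.phase === "idle" ? { phase: "running" } : state;
    case "tool_confirmation":
      // Approval and rejection both resume the loop; rejection carries feedback for the model.
      return state.phase === "awaiting_confirmation" && state.toolRunId === signal.toolRunId
        ? { phase: "running" }
        : state;
    case "cancel":
      return { phase: "idle" };
    case "post_process":
      return state; // title and summary generation do not change the run phase
  }
}

let workflowState: WorkflowState = { phase: "awaiting_confirmation", toolRunId: "tr_1" };
workflowState = onSignal(workflowState, { type: "tool_confirmation", toolRunId: "tr_1", approved: true });
console.log(workflowState);
```

Between signals nothing executes, which matches the claim that an idle conversation consumes no compute.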

Activities and Reliability

All operations (LLM calls, tool executions, database updates, event publishing) run as durable activities with automatic retry policies. Core activities retry up to three times on failure. Tool activities get a single attempt, because tool errors are more useful when fed back to the LLM for self-correction rather than blindly retried. Activities have timeouts and heartbeat signals to handle long-running operations gracefully.
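The two retry policies can be illustrated with a small helper. This is a synchronous, simplified sketch, not the durable engine's own retry mechanism; `RetryPolicy` and `runActivity` are hypothetical names, and "retry up to three times" is read here as three attempts in total.

```typescript
interface RetryPolicy {
  maxAttempts: number;
}

// Assumed reading of the policies described above.
const CORE_ACTIVITY: RetryPolicy = { maxAttempts: 3 };
const TOOL_ACTIVITY: RetryPolicy = { maxAttempts: 1 };

type ActivityResult<T> =
  | { ok: true; value: T; attempts: number }
  | { ok: false; error: string; attempts: number };

function runActivity<T>(fn: () => T, policy: RetryPolicy): ActivityResult<T> {
  let lastError = "unknown error";
  for (let attempt = 1; attempt <= policy.maxAttempts; attempt++) {
    try {
      return { ok: true, value: fn(), attempts: attempt };
    } catch (err) {
      lastError = err instanceof Error ? err.message : String(err);
    }
  }
  // For tool activities this error is what gets fed back to the LLM.
  return { ok: false, error: lastError, attempts: policy.maxAttempts };
}

// A stand-in operation that fails twice before succeeding.
function makeFlaky(): () => string {
  let calls = 0;
  return () => {
    calls += 1;
    if (calls < 3) throw new Error(`transient failure ${calls}`);
    return "saved";
  };
}

const coreResult = runActivity(makeFlaky(), CORE_ACTIVITY);
const toolResult = runActivity(makeFlaky(), TOOL_ACTIVITY);
console.log(coreResult, toolResult);
```

With the same flaky operation, the core policy recovers on the third attempt, while the tool policy fails fast and hands the error to the model.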

Non-confirmation tool calls execute in parallel, improving throughput when the agent requests multiple tools in a single iteration. Mutation tools that require user approval pause the workflow until the user responds, consuming no resources while waiting.
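A sketch of that split: partition the requested calls, run the ungated ones concurrently, and hold the gated ones for approval. The `RequestedToolCall` shape and both function names are hypothetical. Errors are captured per call rather than rejecting the whole batch, so one failing tool does not hide the results of the others.

```typescript
interface RequestedToolCall {
  id: string;
  tool: string;
  requiresConfirmation: boolean;
}

// Split one iteration's tool calls into those that can run now and those that must wait.
function partitionCalls(calls: RequestedToolCall[]): {
  parallel: RequestedToolCall[];
  gated: RequestedToolCall[];
} {
  return {
    parallel: calls.filter((call) => !call.requiresConfirmation),
    gated: calls.filter((call) => call.requiresConfirmation),
  };
}

type Settled<T> =
  | { id: string; ok: true; value: T }
  | { id: string; ok: false; error: string };

// Runs all ungated calls concurrently and collects a result or an error for each one.
async function runParallel<T>(
  calls: RequestedToolCall[],
  run: (call: RequestedToolCall) => Promise<T>,
): Promise<Settled<T>[]> {
  return Promise.all(
    calls.map(async (call): Promise<Settled<T>> => {
      try {
        return { id: call.id, ok: true, value: await run(call) };
      } catch (err) {
        return { id: call.id, ok: false, error: err instanceof Error ? err.message : String(err) };
      }
    }),
  );
}

const plan = partitionCalls([
  { id: "t1", tool: "getTableSchema", requiresConfirmation: false },
  { id: "t2", tool: "queryRows", requiresConfirmation: false },
  { id: "t3", tool: "updateRecord", requiresConfirmation: true },
]);
console.log(plan.parallel.map((c) => c.tool), plan.gated.map((c) => c.tool));
```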

When a user cancels a run mid-execution, the cancellation propagates through the entire execution chain. In-flight tool executions receive cancellation signals, which is especially important for mutation tools, so that unintended changes are prevented.

Showing the Agent's Work in Real Time

Dossbot is intentionally transparent. Users see the run unfold step-by-step, not just a spinner and final answer.

Typical flow: thinking → schema fetch → data query → analysis → recommendation.

For mutations, a confirmation UI appears with the exact proposed changes, and the run does not proceed until the user has explicitly approved the change. ERPs are far too business-sensitive to risk bypassing permissions!

The Event Pipeline

The real-time update pipeline flows from the durable execution layer to the user's browser:

- A durable activity executes and creates a DossbotEvent record in PostgreSQL

- A notification is published to a Redis pub/sub channel

- A GraphQL subscription picks up the notification over WebSocket

- The frontend receives the notification and queries the API for the full event details

- The UI updates

Notifications carry only the fact that an event happened, not the event details themselves. When the frontend receives a notification, it queries the API for the full data. Because event records are stored in PostgreSQL, a transactional data store, the data is always persisted in a consistent state. This means that even if the frontend disconnects and misses notifications, the full state can be reconstructed on reconnect by re-querying the database. There are no event ordering issues or missed events that could cause a permanently stale UI.
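The "thin notification, fetch on receipt" pattern can be sketched in memory. Here `EventStore` stands in for the database behind the API and `ChatClient` for the frontend; both classes, the `seq` cursor, and the event type names are assumptions for illustration.

```typescript
interface StoredEvent {
  seq: number;
  chatId: string;
  type: string;
}

// A notification says only that something happened for a chat; it carries no details.
interface EventNotification {
  chatId: string;
}

// Stands in for the persisted event table behind the API.
class EventStore {
  private readonly events: StoredEvent[] = [];

  append(chatId: string, type: string): EventNotification {
    this.events.push({ seq: this.events.length + 1, chatId, type });
    return { chatId };
  }

  eventsAfter(chatId: string, seq: number): StoredEvent[] {
    return this.events.filter((event) => event.chatId === chatId && event.seq > seq);
  }
}

// Stands in for the frontend: it tracks the last event it has seen and re-queries from there.
class ChatClient {
  private lastSeq = 0;
  readonly seen: string[] = [];
  private readonly store: EventStore;
  private readonly chatId: string;

  constructor(store: EventStore, chatId: string) {
    this.store = store;
    this.chatId = chatId;
  }

  // Used both when a notification arrives and when the client reconnects.
  sync(): void {
    for (const event of this.store.eventsAfter(this.chatId, this.lastSeq)) {
      this.seen.push(event.type);
      this.lastSeq = event.seq;
    }
  }

  onNotification(notification: EventNotification): void {
    if (notification.chatId === this.chatId) this.sync();
  }
}

const eventStore = new EventStore();
const client = new ChatClient(eventStore, "chat_1");

client.onNotification(eventStore.append("chat_1", "AGENT_RUN_STARTED"));
eventStore.append("chat_1", "TOOL_RUN_STARTED"); // notification lost while disconnected
eventStore.append("chat_1", "TOOL_RUN_COMPLETED"); // notification lost while disconnected
client.sync(); // reconnect: catch up from the store
console.log(client.seen);
```

Since the store is the source of truth and the client pulls by cursor, a dropped or duplicated notification changes when the UI updates, not what it ends up showing.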

This transparency also builds trust. Users can see that the agent is working methodically, fetching context before querying, checking schemas before creating records. And if something goes wrong, the full event trail shows exactly where and why.

What's Next

AI agent systems are fundamentally different from traditional software when it comes to testing. The same prompt can produce different tool call sequences and different responses across runs. Today we monitor production behavior through error tracking, max-iteration alerts, and token usage tracking. The immutable event log captures everything needed for offline evaluation, and we are developing formal eval pipelines covering tool selection accuracy, input correctness, and end-to-end task completion.

Beyond evals, three areas are shaping the next phase of Dossbot:

- Memory: Remembering who the user is, what they use DOSS for, and their common workflows, so the agent becomes more personalized and efficient over time.

- Context management: Better handling of long agent-driven flows through compaction and summarization, preventing context window overflow while preserving the information the agent needs.

- Proactive agent flows: Moving beyond reactive Q&A to proactive assistance, where Dossbot surfaces issues, recommends optimizations, and suggests action items, with user approval remaining at the center of any changes to data.

The direction is clear: not chat for chat’s sake, but production agents that operate safely inside real operational systems.